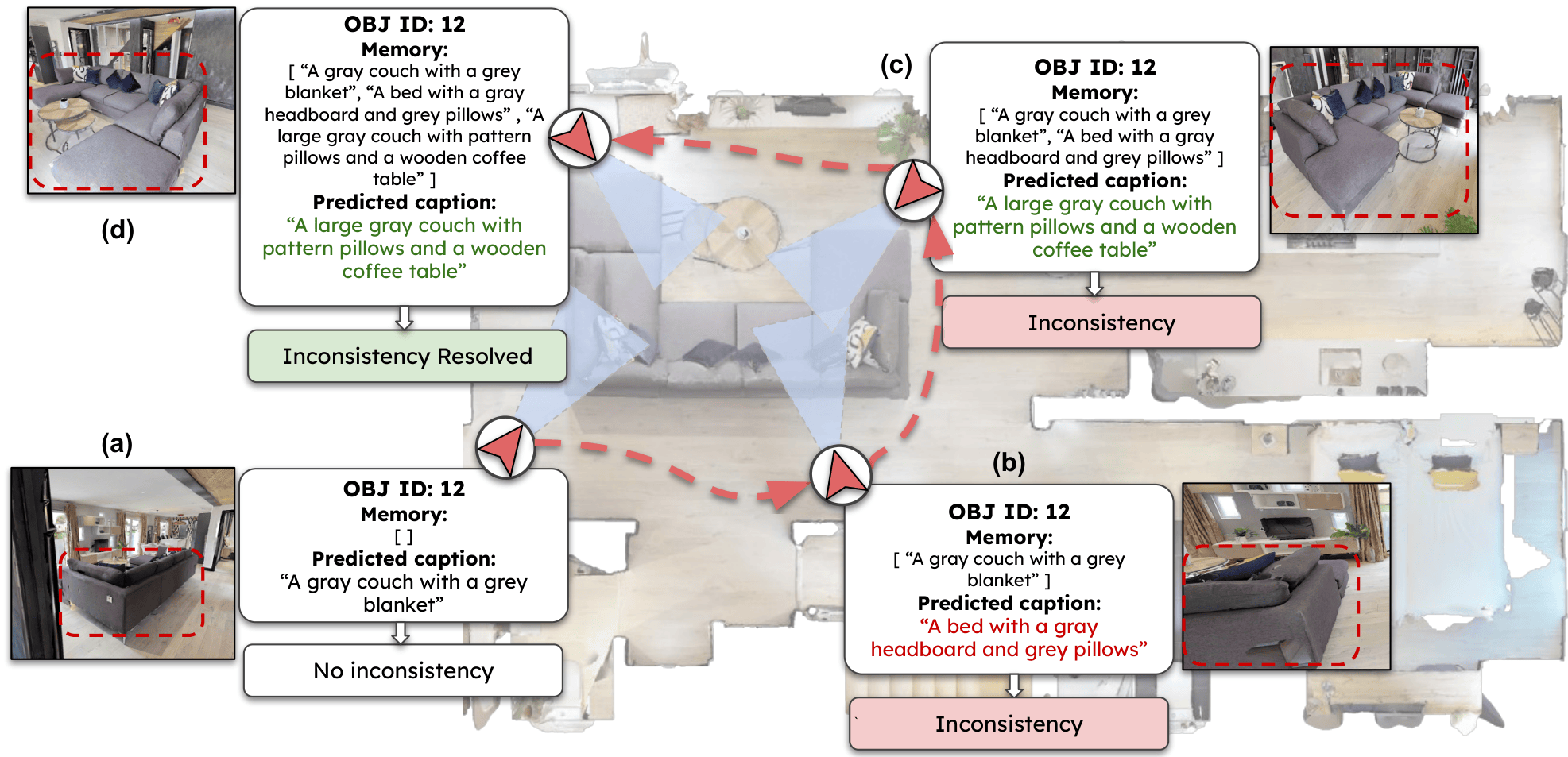

Memory-driven multi-view exploration resolves ambiguous captions into a consistent object-level description: (a)–(b) inconsistent predictions across views; (c)–(d) consistency using episodic object memory.

Abstract

Vision–Language Models (VLMs) often yield inconsistent descriptions of the same object across viewpoints, hindering the ability of embodied agents to construct consistent semantic representations over time. Previous methods resolved inconsistencies using offline multi-view aggregation or multi-stage pipelines that decouple exploration, data association, and caption learning, with limited capacity to reason over previously observed objects. In this paper, we introduce a unified, memory-augmented Vision–Language agent that simultaneously handles data association, object captioning, and exploration policy within a single autoregressive framework. The model processes the current RGB observation, a top-down explored map, and object-level episodic memory serialized into object-level tokens, ensuring persistent object identity and semantic consistency across extended sequences. To train the model in a self-supervised manner, we collect a dataset in photorealistic 3D environments using a disagreement-based policy and a pseudo-captioning model that enforces consistency across multi-view caption histories. Extensive evaluation on a manually annotated object-level test set demonstrates improvements of up to +11.86% in standard captioning scores and +7.39% in caption self-similarity over the baseline model, while enabling scalable performance through a compact scene representation.

Motivation

Humans build stable object understanding through embodied exploration: by moving, revisiting objects, and integrating observations over time, they form consistent semantic representations. In contrast, vision–language models trained on static image–text pairs treat each frame independently, often producing inconsistent descriptions of the same object as the viewpoint changes. This semantic drift breaks object identity over time and limits their use in embodied settings.

To address this, we propose a unified, memory-augmented approach where perception, memory, and action are jointly modeled, enabling agents to actively select informative viewpoints and maintain consistent object representations across time.

EPOS-VLM

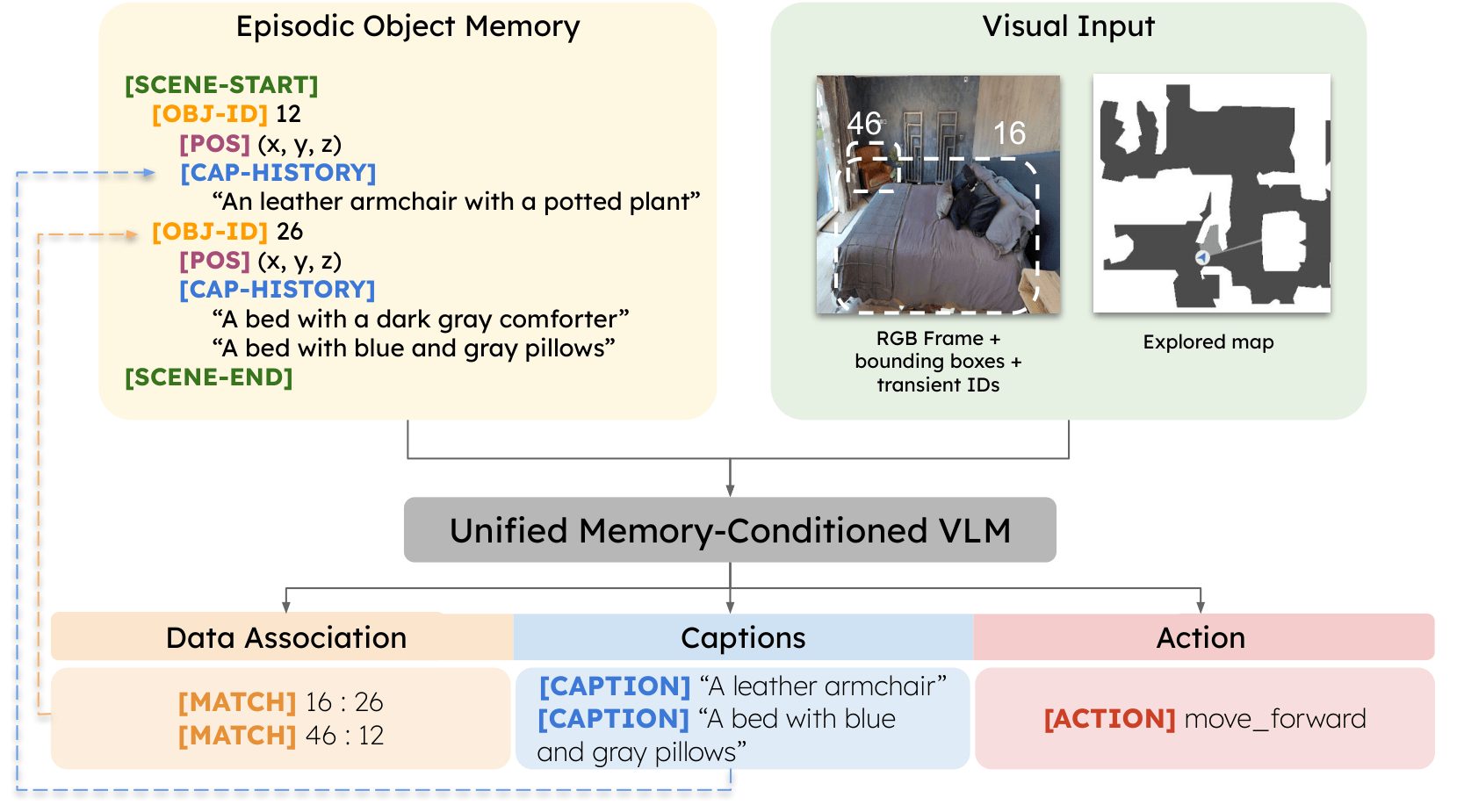

We introduce EPOS-VLM (Embodied Persistent Object Semantics): a single memory-conditioned policy that explores 3D scenes and builds consistent language representations per object. The model conditions on the current RGB frame (with detected objects), a top-down explored map, and tokenized episodic memory (per-object caption histories and 3D positions). In one autoregressive pass it predicts data association (linking detections to persistent IDs or new objects), object-level captions, and navigation actions. Training uses self-supervised pseudo-captions from a 3D-aware aggregator (3D-CPS), with data collected under a disagreement-based exploration policy in Habitat (HM3D / Gibson).

Contributions

- A unified, memory-conditioned vision–language–action model for embodied captioning that jointly learns data association, object-level captioning, and action prediction.

- Structured tokenization of episodic object memory so a pretrained VLM can reason over long-horizon object histories end-to-end.

- An embodied captioning dataset with navigation trajectories and object-level pseudo-captions, plus a manually annotated object-level caption benchmark.

Architecture

EPOS-VLM is built on a pretrained Qwen3-VL-2B backbone. Visual inputs are the RGB observation (with instance boxes and transient IDs) and the explored map, resized and stacked into a single image; episodic memory is serialized with special tokens ([SCENE-START], [OBJ-ID], [CAP-HISTORY], etc.) and prepended to the language prompt. The model autoregressively decodes [MATCH] tokens (linking frame IDs to memory or NEW_ID), object-level captions, and a discrete navigation action. The diagram below summarizes the full pipeline.

Overview of EPOS-VLM: memory, visual inputs, and unified outputs for association, captioning, and action.
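The memory serialization described in the Architecture section can be illustrated with a minimal sketch. It is not the authors' implementation: the `[SCENE-START]`, `[OBJ-ID]`, and `[CAP-HISTORY]` tokens come from the text above, while the `[POS]` and `[SCENE-END]` tokens, the `MemoryObject` class, the `serialize_memory` function, the `|` separator, and the `max_captions` cap are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryObject:
    """One entry of episodic object memory (hypothetical structure)."""
    object_id: int
    position: tuple  # (x, y, z) 3D position of the object
    captions: list = field(default_factory=list)  # caption history, oldest first


def serialize_memory(objects, max_captions=3):
    """Flatten object memory into a token string to prepend to the prompt.

    Only the most recent `max_captions` captions per object are kept, so the
    serialized length per object stays bounded (an assumed design choice).
    """
    if max_captions < 1:
        raise ValueError("max_captions must be at least 1")
    parts = ["[SCENE-START]"]
    for obj in objects:
        x, y, z = obj.position
        parts.append(f"[OBJ-ID] {obj.object_id}")
        # [POS] is a hypothetical token name for the 3D position.
        parts.append(f"[POS] {x:.2f} {y:.2f} {z:.2f}")
        recent = obj.captions[-max_captions:]
        parts.append("[CAP-HISTORY] " + " | ".join(recent))
    # [SCENE-END] is a hypothetical closing token.
    parts.append("[SCENE-END]")
    return " ".join(parts)


if __name__ == "__main__":
    memory = [
        MemoryObject(7, (1.0, 0.5, -2.25),
                     ["a grey sofa", "a grey couch with cushions"]),
    ]
    print(serialize_memory(memory, max_captions=1))
```

Capping the caption history per object is one simple way to keep the prompt length bounded as an episode grows; the source states only that the memory is fixed-capacity, not how that capacity is enforced.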

Results (highlights)

On HM3D, EPOS-VLM improves over strong VLMs and prior embodied captioning pipelines on standard caption metrics and on cross-view semantic consistency (SBERT cosine similarity between captions of the same object). Removing episodic memory sharply degrades performance, showing that memory is central. Compared to a dense point-cloud association baseline, EPOS-VLM achieves similar association quality with much lower inference time and memory thanks to fixed-capacity tokenized memory. An exploration ablation (random goals vs. frontier vs. EPOS-VLM policy) shows that action choice, and not only aggregation, matters for consistency.

Object-level captioning and consistency (HM3D manually annotated test set). Accuracy metrics (B4 through CS): higher is better. Mean CS / Median CS: higher is better. IQR: lower is better.

| Model | B4 | M | RL | CI | SP | CS | Mean CS | Median CS | IQR |
|---|---|---|---|---|---|---|---|---|---|
| Qwen3-VL | 16.00 | 15.97 | 41.15 | 0.84 | 29.88 | 60.01 | 59.65 | 60.01 | 29.12 |
| BLIP-2 | 10.99 | 14.51 | 39.99 | 0.57 | 26.16 | 56.88 | 60.15 | 64.12 | 25.29 |
| Intern-VL | 17.21 | 16.87 | 46.02 | 0.98 | 30.02 | 61.18 | 55.19 | 58.76 | 27.34 |
| CoCa | 15.23 | 16.13 | 43.33 | 0.83 | 29.95 | 57.47 | 57.87 | 57.26 | 29.01 |
| Florence | 14.19 | 17.26 | 44.03 | 0.78 | 31.45 | 59.12 | 52.56 | 55.00 | 26.98 |
| Galliena et al. (ICCV’25) | 19.02 | 23.04 | 48.02 | 1.12 | 39.78 | 70.00 | 81.98 | 83.07 | 8.87 |
| EPOS-VLM | 25.86 | 27.89 | 59.88 | 1.87 | 41.82 | 77.12 | 89.37 | 90.32 | 2.79 |
| EPOS-VLM w/o memory | 16.65 | 18.01 | 45.34 | 0.85 | 27.67 | 64.62 | 52.12 | 52.84 | 32.01 |

B4: BLEU-4; M: METEOR; RL: ROUGE-L; CI: CIDEr; SP: SPICE; CS: cosine similarity between SBERT embeddings of prediction and reference caption. Mean CS / Median CS: cosine similarity between SBERT embeddings of captions for the same object across views. IQR: interquartile range of pairwise cosine similarities (lower = more consistent).
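The consistency statistics defined above (Mean CS, Median CS, and IQR over pairwise cosine similarities) can be computed as in the following sketch. It assumes the caption embeddings for one object are already available as plain lists of floats, for example from an SBERT encoder. The function names are hypothetical, and the quartile method (`"inclusive"`) and any scaling to a 0–100 range are assumptions, since the source does not specify them or say whether the statistics are pooled across objects.

```python
import math
from itertools import combinations
from statistics import mean, median, quantiles


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def pairwise_similarities(embeddings):
    """Cosine similarity for every unordered pair of caption embeddings."""
    return [cosine(u, v) for u, v in combinations(embeddings, 2)]


def consistency_stats(embeddings):
    """Mean, median, and interquartile range of pairwise similarities.

    Needs at least three embeddings so that there are at least two pairs,
    which is the minimum that statistics.quantiles accepts on all versions.
    """
    sims = pairwise_similarities(embeddings)
    if len(sims) < 2:
        raise ValueError("need at least three caption embeddings")
    q1, _, q3 = quantiles(sims, n=4, method="inclusive")
    return {"mean": mean(sims), "median": median(sims), "iqr": q3 - q1}


if __name__ == "__main__":
    # Toy 2D vectors standing in for embeddings of three captions of one object.
    views = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
    print(consistency_stats(views))
```

A high mean or median with a small IQR indicates that captions of the same object stay close to each other across views, which is the pattern the table reports for EPOS-VLM.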

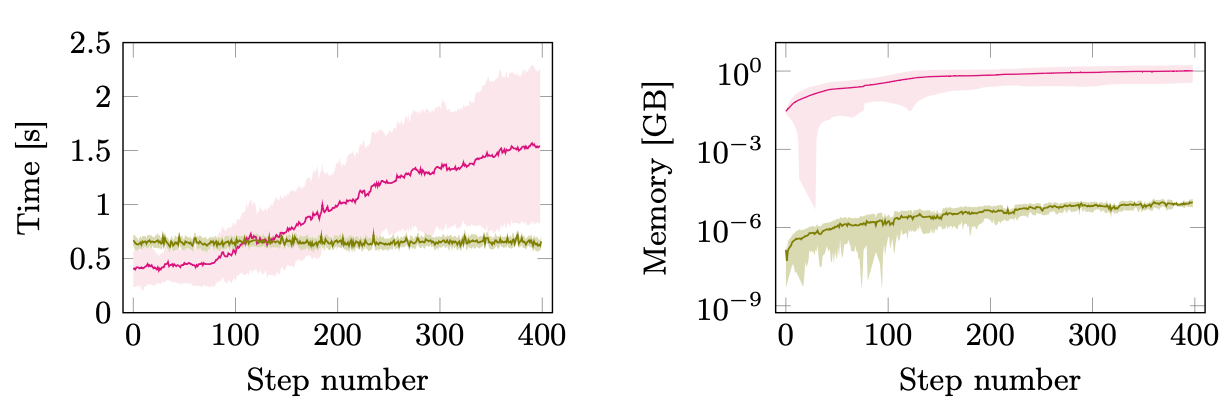

Inference time and memory. Compared to a dense point-cloud association baseline, EPOS-VLM maintains near-constant per-step wall-clock time and a small, fixed-capacity memory representation, while the baseline’s cost grows over long episodes. The plots show the mean and standard deviation over HM3D test episodes (400 steps).

Per-step inference time (left) and memory footprint (right, log scale), averaged over HM3D test episodes: EPOS-VLM vs. dense point-cloud baseline.

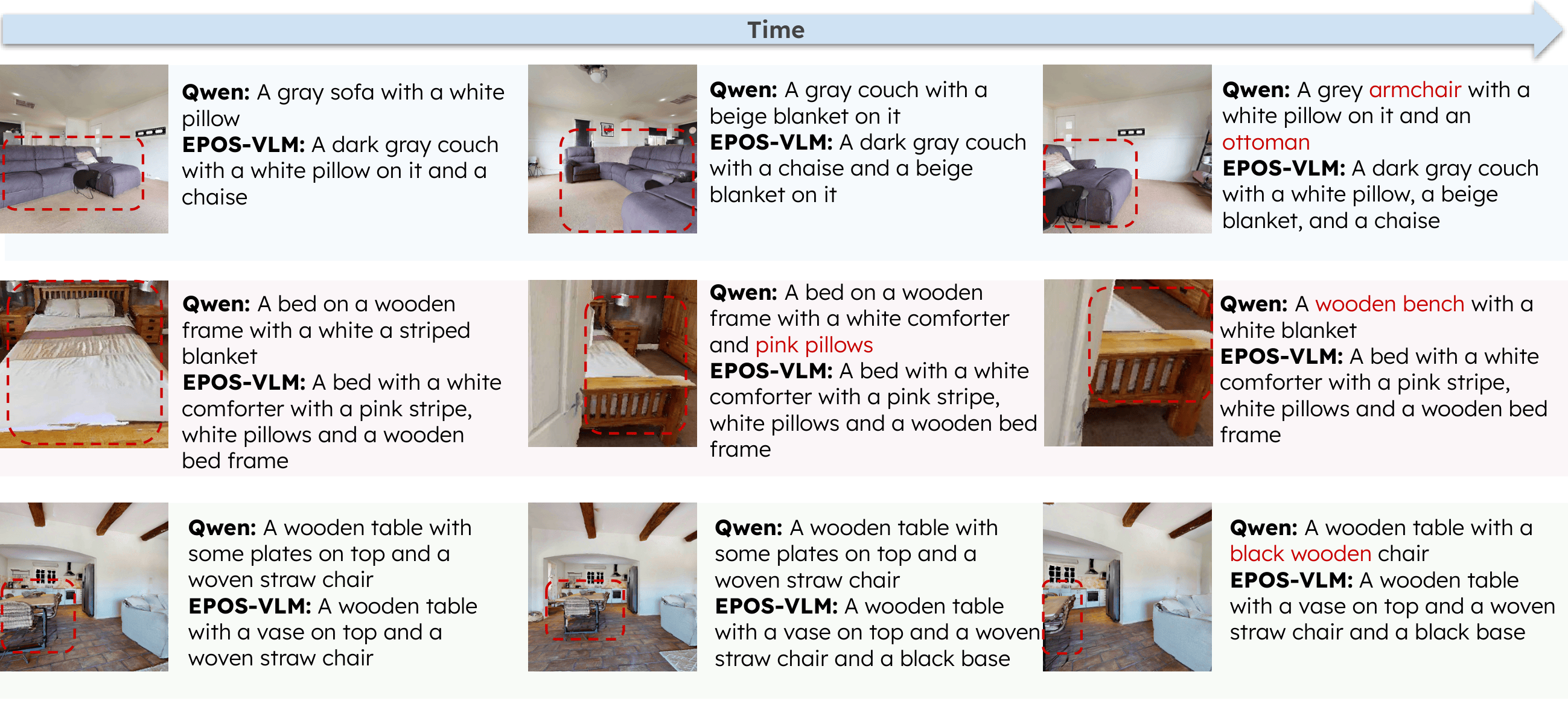

Qualitative comparison. Over time along the same trajectory, Qwen3-VL produces view-dependent captions for the boxed object (sofa vs. armchair, bed vs. bench, shifting table/chair details); mistakes are highlighted in red in the figure. EPOS-VLM keeps a stable object-level description by conditioning on episodic memory and association.

Caption comparison along exploration trajectories: Qwen3-VL (independent per-frame predictions) vs. EPOS-VLM (memory-conditioned). Red text marks Qwen3-VL inconsistencies.

Test-set trajectories

Combined trajectory visualizations from EPOS-VLM rollouts on HM3D test scenes (one panel per episode).

Conclusion

EPOS-VLM shows that persistent object memory and end-to-end training of captioning, association, and actions can substantially improve object-level caption accuracy and viewpoint consistency for embodied VL agents, with efficient inference compared to dense 3D representations. Limitations include reliance on an external segmenter and evaluation in simulated, static environments; future work includes tighter integration of perception, real-world deployment, and dynamic scenes.

BibTeX

@misc{galliena2026memoryaugmentedvisionlanguageagentspersistent,
  title={Memory-Augmented Vision-Language Agents for Persistent and Semantically Consistent Object Captioning},
  author={Tommaso Galliena and Stefano Rosa and Tommaso Apicella and Pietro Morerio and Alessio Del Bue and Lorenzo Natale},
  year={2026},
  eprint={2603.24257},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2603.24257},
}